Age Eleven: Never Again

88. King Abdullah

Abdullah bin Abdulaziz Al Saud (1924–2015) was the King of Saudi Arabia from 2005 until his death in 2015. He was the son of Ibn Saud, the founder (and namesake) of modern Saudi Arabia. He was named crown prince when his brother Fahd became king in 1982. When King Fahd had a debilitating stroke in 1995, Abdullah became the de facto ruler, and he formally became king when Fahd died a decade later.

King Abdullah bin Abdulaziz Al Saud in January 2007

Although Saudi Arabia ranks very low on the democracy-authoritarianism and human rights scales (see entry for Saudi Arabia), King Abdullah was a relatively moderating influence. Late in his reign he gave women the right to vote for municipal councils and to compete in the Olympics. He encouraged high-quality, Western-style education for at least some citizens, both men and women. Although he maintained Sharia law (a strict legal system based on Islamic principles), he introduced professional training for judges and a review system for decisions. He took steps to moderate the conduct of the Mutaween, the religious police who enforce public dress, attendance at prayer, and other behaviors.

He started an entrepreneurial sector in what had been a stifling process for starting new businesses. He founded King Abdullah University of Science and Technology with a relatively modern curriculum and equal opportunity for both genders. He modernized the education system at all grade levels to prepare the population for STEM (science, technology, engineering, and math) fields.

Abdullah maintained a positive political and military relationship with the United States. The US needs Saudi Arabian oil and Saudi Arabia’s moderating influence in OPEC (the Organization of the Petroleum Exporting Countries, which influences world oil prices), while Saudi Arabia in turn needs US military and intelligence support to survive in a volatile region of the world.

Abdullah married about 30 times and had more than 30 children. His personal fortune was estimated at $18 billion, although there is no clear division between assets of the royal family and the assets of the nation.

Abdullah took steps towards interfaith dialogue. He was the first Saudi Arabian leader to meet with the Pope (Benedict XVI) and led a number of interfaith meetings calling for tolerance in interfaith relations.

In the alternative reality of Danielle: Chronicles of a Superheroine, King Abdullah is a fan of eleven-year-old Danielle and asks for her guidance to move his nation toward more modern values, including equality of men and women.

How You Can Be a Danielle and help promote peace in the Middle East.

89. Saudi Arabia

Saudi Arabia is the second biggest Arab nation by land mass, adjacent to eight other Arab nations, with coasts on both the Red Sea and the Persian Gulf. It has a population of about 20 million Saudi citizens and eight million foreigners who service the country’s leadership and its oil industry. Most of its land consists of barren deserts.

It houses the two holiest places in Islam: Al-Masjid al-Haram in Mecca, and Al-Masjid an-Nabawi in Medina. Founded by the nation’s namesake Ibn Saud in 1932, it is an absolute hereditary monarchy. All Saudi citizens are required to be Muslims and the primary form of Islam practiced in the country is Wahhabism, also called Salafism, a very strict form of Islam.

As of 2015, Saudi Arabia was the world’s largest oil producer. However, the United States has increasingly perfected the extraction of oil from shale oil rock, which consists of stones with a very low concentration of oil and natural gas. This has led to a dramatic increase in American oil production and a substantial reduction in oil prices, and it has led to the United States overtaking Saudi Arabia in overall oil production in 2016. The Saudi economy is almost exclusively based on oil production, although under King Abdullah (see entry for King Abdullah), major investments have been made in preparing the populace for careers in STEM (science, technology, engineering, and mathematics) fields. Saudi Arabia is the only Arab nation among the 20 largest economies in the world, a position it owes to its fossil fuel economy.

Freedom House, founded in 1941, is a US government funded nongovernmental research organization that evaluates democracy, political freedom, and human rights. It rates Saudi Arabia with its lowest rating, “Not Free.” The Economist ranks the Saudi government as the fifth most authoritarian government out of 167 nations.

There were attempts by King Abdullah to reform the authoritarian form of Saudi government including encouragement of public policy debates, municipal elections, the appointment of the first woman to a ministerial post, and other changes. Abdullah transferred control of girls’ education from the Ulema (an organization of Islamic religious leaders) to the Ministry of Education. Critics have complained that these modernizations have been too slow and ineffective.

Capital punishment, usually by beheading, is carried out at a high rate (345 times between 2007 and 2010) for such offenses as murder, robbery, drug use, apostasy (the abandonment or renunciation of Islam), adultery, homosexuality, witchcraft, and sorcery. This record has been condemned by Amnesty International, Human Rights Watch, and other human rights organizations.

Saudi Arabia has been a long-term political and military ally of the United States, which has inspired terrorist groups such as al-Qaeda to oppose the Saudi government and its leaders.

In the alternative reality of Danielle: Chronicles of a Superheroine, King Abdullah seeks Danielle’s help to accelerate the modernization and reform of Saudi Arabia with varying levels of success.

See entries for King Abdullah, Sovereign funds, and Madrassa schools.

How You Can Be a Danielle and help promote peace in the Middle East.

90. Sovereign funds

A sovereign wealth fund (SWF) is a financial fund that manages the accumulated wealth of a nation. These funds are generally very diversified with investments in many types of assets including stocks, bonds, real estate, commodities, and other funds such as private equity funds, hedge funds, and venture capital funds. At the end of 2014, sovereign wealth funds totaled $7.11 trillion worldwide, of which $4.29 trillion was from countries whose wealth came primarily from oil and gas exports.

In the alternative reality of Danielle: Chronicles of a Superheroine, Danielle discusses with King Abdullah the disposition of Saudi Arabia’s sovereign wealth fund, which at the end of 2014 totaled $672 billion. As part of its economic and strategic alliance with the United States, the Saudi SWF invests most of its money in US Treasury bonds which are safe but pay very low interest rates. Other Arab SWFs generally invest in higher-risk and higher-yield securities. With the collapse of oil prices in 2015, Saudi Arabia has been running a budget deficit. One of its principal investors, Prince Alwaleed bin Talal, called for a change in investment strategy toward higher yield investments that he argues would cover most of the budget deficit. However, Finance Minister Ibrahim Alassaf has rejected this advice.

See entries for Saudi Arabia and Madrassa schools.

91. Madrassa schools

The word Madrassa simply means “school of learning” in Arabic. However, the term usually refers to Islamic education institutions.

The Madrassas that Danielle talks to Saudi King Abdullah about are the schools funded by Saudi Arabia that teach Wahhabism, the dominant faith in Saudi Arabia and an austere and severe form of Islam. Wahhabis believe that it is not sufficient for a person to simply practice Islam, but that anyone who does not practice the Wahhabist form of Islam should be regarded as an enemy. Many Wahhabists believe such heathens should be destroyed.

Wahhabist schools represent the primary source of education in Saudi Arabia and since the 1970s Saudi Arabia and affiliated charities have promoted and supported Wahhabist Madrassa schools across the world, including in the United States. Critics estimate that the level of Saudi funding for Madrassa schools outside Saudi Arabia over the past twenty years has been over $100 billion.

A major part of the curriculum in many Madrassa schools consists of rote reading of the Qur’ān (Koran), the holy book of Islam. The belief is that simply reading the words of the Qur’ān, even without understanding them, will impart wisdom. This reflects, in part, Islam’s emphasis on maintaining the exact words of the Qur’ān without modification since its inception, in contrast with the Jewish and Christian Bibles, which have been repeatedly translated into modern languages.

Radical Islamic terrorist groups such as al-Qaeda, the Taliban, and ISIS have their roots in the Wahhabist faith and many of their leaders and members have been educated in Wahhabist Madrassa schools. Bin Laden appears to have been educated in schools organized by the Muslim Brotherhood, a radical Islamic group in Egypt, although Saudi King Faisal gave support to the Muslim Brotherhood and recruited their teachers for the Saudi Madrassa schools. Although the Saudi government claims to be opposed to al-Qaeda, ISIS, and affiliated terrorist groups, critics say that their opposition is tepid and that they continue to provide major funding for the Madrassa schools that support the ideology of these groups.

In the alternative reality of Danielle: Chronicles of a Superheroine, Danielle encourages King Abdullah to reform the Saudi supported Madrassa schools to provide a more diverse and tolerant education.

See entries for King Abdullah, Saudi Arabia, and bin Laden.

How You Can Be a Danielle and help promote peace in the Middle East.

92. Female genital mutilation (FGM)

Note: Parental Guidance is suggested for children under 13 for this entry.

In 1997 the World Health Organization defined Female Genital Mutilation (FGM) as the “partial or total removal of the external female genitalia or other injury to the female genital organs for non-medical reasons.”

These “non-medical reasons” include religious (primarily Islamic), cultural, and aesthetic traditions that go back hundreds, and in some cases thousands, of years and remain very active in today’s world. FGM is often carried out as part of a girl’s coming of age ceremony but may be done as early as infancy or after puberty.

FGM is an extremely painful procedure and is typically performed in unsterile conditions using razor blades, knives, pieces of glass, scissors and/or sharp stones, and with the girl forcibly restrained.

The procedure differs somewhat from country to country depending on the cultural traditions governing the FGM ritual. It generally involves removal of the clitoral hood and clitoral glans and removal of the inner labia. To bind the resulting wounds, such materials as agave or acacia thorns are typically used instead of surgical thread.

About 20 percent of FGM procedures are of the most severe form, known as infibulation, which includes removal of the inner and outer labia and closing of the vulva with a small hole left open for the passage of urine and menstrual fluid. In this case, the vagina is subsequently opened with another painful procedure for intercourse and for childbirth.

Somali poetess Dahabo Musa describes the “three feminine sorrows” associated with infibulation (the original FGM procedure, the wedding night, and childbirth) in a 1988 poem (See femaleintegrity.se/poem.htm).

Effects may include swelling, excessive bleeding, chronic pain, infections, anemia, tetanus, gangrene, damage to surrounding organs, necrotizing fasciitis (flesh-eating disease), and others. The crude instruments that are typically reused between different girls may aid in the transmission of a variety of diseases, including hepatitis B, hepatitis C, and possibly HIV.

Numerous studies have shown that FGM is extremely harmful to women’s physical and emotional health throughout their lives. Long-term effects include chronic infections, pain during menstruation and sexual intercourse, HIV, and many others.

UNICEF estimated that in 2014 there were 133 million girls and women who had undergone FGM earlier in their lives with three million girls experiencing it each year. The 2008 Demographic Health Survey found that 91 percent of women aged 15 to 49 in Egypt had undergone FGM. FGM is prevalent in Saudi Arabia although exact numbers are not available.

The numbers are significant even in western countries due to a large Diaspora population of the cultures that practice it. An unpublished 2015 study by the Centers for Disease Control estimated that a half million women and girls in the United States had undergone FGM or were likely to have the procedure. The European Parliament estimated in 2009 that half a million women in Europe had undergone it.

Although the practice is generally carried out by women, their motivation is often to spare the girls from social exclusion by men who will otherwise reject and ostracize them.

There has been an international movement to end FGM since the 1970s. In 2003, the United Nations designated every February 6th as an “International Day of Zero Tolerance to Female Genital Mutilation.” In 2012 the United Nations General Assembly voted unanimously to recognize FGM as a violation of human rights. As of 2015, the practice is nominally outlawed (or in some cases merely restricted) in most countries. However, these laws are almost never enforced, and they have had limited effect on lowering these numbers. The incidence of FGM rituals each year as a percentage of the population has declined slightly in recent decades, but the total number (of new procedures each year) is actually climbing because population growth is outpacing the small percentage reduction in the practice.

Remarkably, there has been a reaction against the FGM eradication movement led by anthropologists who accuse the anti-FGM movement of cultural colonialism by placing their cultural ideas above the centuries-old traditions of the cultures that practice FGM. For example, Sylvia Tamale, a Ugandan law professor, writes that western opposition to FGM stems from “a Judeo-Christian judgment that African sexual and family practices are primitive and require correction.” She goes on to write that African feminists “do not condone the negative aspects of the practice but take strong exception to the imperialist, racist, and dehumanizing infantilization of African women.” Even those who oppose FGM commonly encourage a high degree of sensitivity to the cultures that practice it. This pushback by factions of anthropologists has largely succeeded in quieting the anti-FGM movement.

On a personal note, I saw the wildly successful musical The Book of Mormon, written by Trey Parker and Matt Stone, creators of the animated comedy South Park, along with Robert Lopez, co-composer and co-lyricist of Avenue Q and Frozen. I did find some of the intentionally offensive, irreverent and politically incorrect dialogue and lyrics humorous, but the musical lost me in its treatment of FGM. In one of the songs, “Hasa Diga Eebowai” (which translated literally into English from Ugandan means “F*** you God!”), the cast enthusiastically sings “All the young girls here get circumcised! Their clits get cut right off! Dayyyyyo!!” The musical has been praised by some for bringing the issue of FGM to a wider audience, but in my view this effort was unsuccessful judging by the unrestrained laughter that these lyrics (and other lyrics about FGM) engendered. I do think it is possible to create humor from serious issues, but my sense in this case was that the audience was laughing as a result of their uncorrected ignorance of how severe an issue FGM is in the real world.

In the alternative reality of Danielle: Chronicles of a Superheroine, in her speech to the United Nations General Assembly (having been invited by King Abdullah to take the Saudi Arabian speaking spot), eleven-year-old Danielle begins by citing a reason that people do not speak about FGM other than the multiculturalist pushback from anthropologists and some feminists. She describes FGM as an egregious form of female and child abuse and the fight to end FGM as a matter of fundamental human rights. She decries the failure of the feminist movement to give a priority to ending it. Danielle points out that there is essentially no public discussion of this issue despite the fact that millions of girls undergo this degrading and barbaric procedure each year.

She also condemns the phrase “female circumcision” saying that this is not a benign synonym for FGM but rather a “grotesque and inexcusable distortion of language.” She goes on to say that “The proper analogy to male anatomy would be female castration.”

How You Can Be a Danielle and help eradicate female genital mutilation.

93. Cholera

Cholera is a bacterial infection caused by the bacterium Vibrio cholerae. If treated appropriately, it can be readily cured with antibiotics, electrolyte rebalancing, and oral rehydration, but in poor areas without basic healthcare, its effects can be severe with death rates over 50 percent. With proper treatment, death rates can be held to less than one percent.

Cholera is spread by infected seafood, water and food contaminated with human feces, and poor sanitation. Prevention efforts generally focus on establishing proper sanitation.

The World Health Organization estimates that there are 1.3 to 4.0 million cases of cholera each year, with 21,000 to 143,000 deaths from the disease. This is a big improvement compared to the 1980s, when deaths were estimated at over three million persons a year.

The worst epidemic of cholera in recent history was in Haiti after the devastating earthquake there in 2010. The epidemic started in a United Nations health center and quickly spread to all regions. By 2015, over 700,000 Haitians had become ill with cholera. Due to intensive efforts at treatment by Haitian and international health organizations, the death rate has been held to fairly low levels with an estimated 9,000 deaths.

In the alternative reality of Danielle: Chronicles of a Superheroine, after losing her Mum in the Haitian earthquake, Danielle’s sister Claire becomes heavily involved in successful efforts to combat cholera in Haiti.

How You Can Be a Danielle and promote world health.

94. United Nations General Assembly

The General Assembly (GA) is the one organ of the United Nations that all member nations participate in. Its one-vote-per-nation rule allows nations with only five percent of the world population to pass a resolution by a two-thirds margin.

The General Assembly has little formal power in that its resolutions are not binding on its members. Binding resolutions on significant security issues must be passed by the Security Council (which has five permanent members who wield a veto and 10 non-permanent members). The General Assembly is influential, however, in that speeches to the General Assembly receive worldwide attention, and the GA is often a focal point for diplomacy on a wide range of world issues.

The most important formal functions of the GA are that it appoints the non-permanent members of the Security Council and is responsible for the overall budget of the United Nations.

The first session of the General Assembly was in 1946 and included 51 nations. Today there are 193 nations represented.

In the alternative reality of Danielle: Chronicles of a Superheroine, the eleven-year-old Danielle addresses the General Assembly taking the Saudi Arabian speaking spot having been invited by King Abdullah. Her speech focusing on Female Genital Mutilation (see the entry for Female Genital Mutilation) and the rights of girls results in controversy and a backlash.

How You Can Be a Danielle and help promote peace in the Middle East.

95. Female circumcision

“Female circumcision” is a phrase often used to refer to Female Genital Mutilation.

In the alternative reality of Danielle: Chronicles of a Superheroine, eleven-year-old Danielle condemns the phrase female circumcision in her speech to the United Nations General Assembly as a “grotesque and inexcusable distortion of language.”

See entry for Female genital mutilation.

How You Can Be a Danielle and help eradicate female genital mutilation.

96. Realpolitik

Realpolitik is a term for politics, diplomacy, and strategic thinking based primarily on considerations of military and financial power rather than moral, ethical, or ideological factors. This approach can be considered simply pragmatic or, more pejoratively, coercive, intimidating, and amoral.

The word comes from the 1853 book Grundsätze der Realpolitik angewendet auf die staatlichen Zustände Deutschlands by German politician Ludwig von Rochau, who wrote:

The study of the powers that shape, maintain and alter the state is the basis of all political insight and leads to the understanding that the law of power governs the world of states just as the law of gravity governs the physical world.

In the alternative reality of Danielle: Chronicles of a Superheroine, Claire comments on Danielle’s relationship to considerations of Realpolitik in her interactions with world issues.

97. National Organization for Women

The National Organization for Women (NOW) is a prominent US based feminist organization founded in 1966.

The precursors to the founding of NOW include the President’s Commission on the Status of Women, organized by President Kennedy in 1961 with Eleanor Roosevelt as the head. This was followed by the publication of American author and activist Betty Friedan’s (1921–2006) highly influential book The Feminine Mystique in 1963. The Civil Rights Act, which prohibited sex discrimination, was passed in 1964, but it quickly led to frustration among feminists due to the lack of enforcement of its gender provisions.

In an interview after publishing The Feminine Mystique, Betty Friedan said:

… I realized that it was not enough just to write a book. There had to be social change. And I remember somewhere in that period coming off an airplane [and] some guy was carrying a sign … It said, “The first step in revolution is consciousness.” Well, I did the consciousness with The Feminine Mystique. But then there had to be organization and there had to be a movement.

Betty Friedan in 1963

Friedan became the cofounder and first president of NOW at its organizing conference in Washington, DC on October 29, 1966. The first paragraph of the NOW Statement of Purpose, which Friedan drafted on a napkin, reads:

We, men and women, who hereby constitute ourselves as the National Organization for Women, believe that the time has come for a new movement toward true equality for all women in America, and toward a fully equal partnership of the sexes, as part of the worldwide revolution of human rights now taking place within and beyond our national borders.

My family has been involved with the feminist movement going back to the founding of the Stern Schule in Vienna in 1868 by Regina Stern, my mother’s mother’s mother. It was the first school in Europe that provided higher education for girls. If a girl was lucky enough to get an education at all in the mid-nineteenth century in Europe, it went only through ninth grade. The Stern Schule went through fourteenth grade. Her daughter, my grandmother Lillian Bader, became the first woman in Europe to be awarded a PhD in chemistry and subsequently took over the school (see entry for The Stern Schule).

In the alternative reality of Danielle: Chronicles of a Superheroine, in her speech to the United Nations General Assembly, the eleven-year-old Danielle criticizes NOW and other American feminist organizations for their lack of emphasis and priority on ending Female Genital Mutilation (see entry for Female genital mutilation).

How You Can Be a Danielle and help promote equal rights for women.

98. China’s one-child policy

China’s one-child policy refers to a set of laws introduced between 1978 and 1980 in China that made it illegal in most circumstances to have more than one child. There were exceptions for certain minority groups, and, in later years, parents in certain portions of the population were allowed to have a second child if their first child was a girl.

The early history of communist China saw the population almost double. Under Mao Tse-tung, infant mortality declined from 227 of every 1000 births (nearly a quarter of all births) to 53 in 1981, life expectancy increased from 35 to 66, and the government encouraged large families because it believed that this would make the nation stronger. As a result, China’s population went from 540 million in 1949 to 940 million in 1976.

In the late 1970s, western writers and organizations such as the Club of Rome and the Sierra Club were predicting a worldwide catastrophe caused by overpopulation. The thinking of Chinese leaders was heavily influenced by these views, especially the ideas contained in two popular books, The Limits to Growth by Dennis Meadows, Donella Meadows, Jørgen Randers, and William W. Behrens III, and A Blueprint for Survival by Edward Goldsmith, which were widely read by the Chinese leadership. The one child policy was adopted by the Chinese Communist Party in 1979.

The policy has been heavily criticized for the resulting forced sterilizations, forced abortions, and strict control over birth permits. The biggest controversy is what happened to tens of millions of missing girls. The ratio of boys to girls at birth in China has been around 117 to 100 since the one-child policy was instituted. According to China’s National Population and Family Planning Commission, there will be 30 million more men than women in China by 2020, which will lead to problems of social instability. In a report by the Canadian Broadcasting Corporation, “the [Chinese] government … has expressed concern about the tens of millions of young men who won’t be able to find brides and may turn to kidnapping women, sex-trafficking, other forms of crime or social unrest.”

Although sex-specific abortion, child abandonment, and infanticide are illegal in China, the US State Department, the British Parliament, and the human rights organization Amnesty International have each claimed that China’s one child policy has contributed to infanticide. Amartya Sen, quoted in 2013 in the Georgetown Journal’s Guide to the One-Child Policy said, “The one-child policy has led to the ‘Missing Women,’ or the 100 million girls ‘missing’ from the populations of China and other developing countries as a result of female infanticide, abandonment and neglect.” University of California at Davis scientist G. William Skinner and researcher Yuan Jianhua have written that infanticide occurred fairly frequently in China before the 1990s.

American social scientist and China expert Steven W. Mosher wrote in his 1993 book A Mother’s Ordeal, that it was not uncommon for Chinese women to have their children killed right before or after birth.

In his 2008 book, Population Control: Real Costs, Illusory Benefits, Mosher writes:

… those who argue for [overpopulation’s] premises conjure up images of poverty—low incomes, poor health, unemployment, malnutrition, overcrowded housing to justify antinatal programs. The irony is that such policies have in many ways caused what they predicted—a world which is poorer materially, less diverse culturally, less advanced economically, and plagued by disease. The population controllers have not only studiously ignored mounting evidence of their multiple failures, they have avoided the biggest story of them all. Fertility rates are in free fall around the globe.

About half of the “missing women” in China in the 1980s and 1990s are accounted for by the common practice of Chinese female babies and young children having been adopted by parents in other countries. Another common phenomenon called “heihaizi” (meaning black child) refers to children (mostly girls) born outside of the one-child policy who are not registered with the Chinese National Household Registration System. These children do not legally exist and thus are not eligible for such services as education, health care, and protection under the law.

Recent research studies indicate that the abandonment and killing of baby girls has become rare compared to earlier periods of time due to stricter enforcement of laws against the practice and increasing numbers of exceptions to the one-child policy.

In 2015, the Chinese Government announced the end of the one-child policy, instituting instead a two-child policy. Parents will still need to obtain a license to have each child. The government offered no reasons for the change and claimed that the one-child policy was successful and prevented 400 million births. The validity of this claim has been widely disputed. For example, Mei Fong, former journalist for the Wall Street Journal and winner of the Pulitzer Prize, writes in her 2015 book, One Child: The Past and Future of China’s Most Radical Experiment:

The reason China is doing this right now is because they have too many men, too many old people, and too few young people. They have this huge crushing demographic crisis as a result of the one-child policy. And if people don’t start having more children, they’re going to have a vastly diminished workforce to support a huge aging population.

In the alternative reality of Danielle: Chronicles of a Superheroine, eleven-year-old Danielle criticizes China’s one-child policy as having been a disaster for girls. Claire debates Danielle’s message with the president of the National Organization for Women.

How You Can Be a Danielle and promote democracy in China.

99. Benjamin Netanyahu

Benjamin Netanyahu (born in 1949), nicknamed “Bibi,” was elected as the 9th and 13th prime minister of Israel and is serving as prime minister as of 2015. He has been elected prime minister four times, matching the record of David Ben-Gurion, Israel’s first prime minister.

Benjamin Netanyahu speaking to the United Nations General Assembly in 2014

Between 1967 and 1972, Netanyahu served in the Israeli military, became a team leader of a special forces unit, and was injured in 1972.

He attended the Massachusetts Institute of Technology in the 1970s and received Bachelor of Science and Master of Science degrees. He was in an MIT class that I lectured to in the 1970s on emerging technologies.

After graduating from MIT, he was a consultant for the Boston Consulting Group. In 1978 he returned to Israel where he founded the Yonatan Netanyahu Anti-Terror Institute. It was named after his brother, Yonatan Netanyahu, who, in 1976, led Operation Entebbe, in which 100 Israeli Commandos carried out a successful and daring rescue in Uganda to free 102 mostly Israeli hostages. Yonatan Netanyahu was the only Israeli death resulting from the mission.

Benjamin Netanyahu became the leader of the Likud party, the major center-right secular party in Israel, and was elected prime minister in 1996 serving for three years.

He became the Foreign Affairs Minister and Finance Minister in Ariel Sharon’s Likud led government between 2002 and 2005. As Minister of Finance, he led a major reform effort away from Israel’s (democratic) socialist orientation and towards the creation of a robust entrepreneurial sector. He was widely credited with having dramatically boosted the Israeli economy which cemented his leadership of the conservative political and economic factions in Israel.

In 2005 Sharon left Likud to form Kadima, a new center-right party, and Netanyahu again became the leader of the Likud party. In 2009 he again was elected prime minister and has served in that position since that time.

His coalition includes smaller parties pressing for expanding settlements in the West Bank, and his concessions to these parties have created tension with the United States and Europe. He has taken the position that in any peace settlement with the Palestinians, Israeli settlers in the West Bank should be allowed to stay in their settlements under Palestinian rule.

Netanyahu’s second and third terms as prime minister have been characterized by unwavering opposition to Iran obtaining a nuclear weapon. In speeches in 2009, he said, “Iran is seeking to obtain a nuclear weapon and constitutes the gravest threat to our existence since the war of independence.” He added, “The Iranian regime is motivated by fanaticism … They want to see us go back to medieval times. The struggle against Iran pits civilization against barbarism. This Iranian regime is fueled by extreme fundamentalism.”

The Obama administration’s negotiation and agreement with Iran to place restrictions on Iran’s nuclear program in exchange for a $150 billion relief of western sanctions has led to significant tension between the Netanyahu and Obama administrations. A poll of the Israeli public in late 2014 showed that the overwhelming majority of Israelis believe the relationship between Israel and the US has been hurt by the poor relationship between Obama and Netanyahu.

In 2009, I met with Prime Minister Netanyahu in his office after having engaged in an onstage dialogue with Israeli President Shimon Peres at the Israeli Presidential Conference in Jerusalem. Netanyahu remembered having attended my class in the 1970s. I reported to him on a study that Larry Page, cofounder of Google, and I had conducted on emerging energy technologies for the US National Academy of Engineering. Our study had concluded that the number of watts of solar energy produced worldwide was doubling every two years (and had been for twenty-five years) and was only eight more doublings from meeting all of the world’s energy needs. Netanyahu asked, “But do we have enough sunlight to do this with?” I responded that we have 10,000 times more than we need. In other words, after doubling eight more times and meeting all of the world’s energy needs (in less than twenty years), we would be using only one part in 10,000 of the free sunlight that falls on the Earth. Based on our discussion, he announced at the Israeli Presidential Conference the next day the organization of an Israeli institute to accelerate the use of solar energy and other emerging renewable energy sources.
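The arithmetic behind this exchange can be checked with a short Python sketch. It assumes only the figures quoted above (a two-year doubling period, eight remaining doublings, and a 10,000-fold surplus of sunlight); the function and constant names are illustrative and are not taken from the National Academy of Engineering study.

```python
# Figures quoted in the text above; everything else is illustrative.
DOUBLING_PERIOD_YEARS = 2      # solar output doubles every two years
DOUBLINGS_REMAINING = 8        # doublings needed to meet world energy needs
SUNLIGHT_SURPLUS = 10_000      # available sunlight vs. what would be needed


def growth_factor(doublings: int) -> int:
    """Total multiplication in output after a number of doublings."""
    return 2 ** doublings


def years_to_target(doublings: int, period_years: float) -> float:
    """Time required if each doubling takes `period_years`."""
    return doublings * period_years


def current_share_of_demand(doublings: int) -> float:
    """Implied share of world energy needs met before the doublings."""
    return 1 / growth_factor(doublings)


def fraction_of_sunlight_used(surplus: float) -> float:
    """Fraction of incoming sunlight used once all needs are met."""
    return 1 / surplus


if __name__ == "__main__":
    print("Growth factor:", growth_factor(DOUBLINGS_REMAINING))
    print("Years needed:", years_to_target(DOUBLINGS_REMAINING, DOUBLING_PERIOD_YEARS))
    print("Implied share today:", current_share_of_demand(DOUBLINGS_REMAINING))
    print("Sunlight used:", fraction_of_sunlight_used(SUNLIGHT_SURPLUS))
```

Eight doublings multiply output by 256, which implies that solar supplied roughly 0.4 percent of world energy needs at the time of the study. Eight two-year doublings take sixteen years, consistent with "less than twenty years."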


In the alternative reality of Danielle: Chronicles of a Superheroine, eleven-year-old Danielle meets with Prime Minister Netanyahu in his office. This is their first conversation:

“What is a nice Jewish girl from Los Angeles doing running Saudi Arabia?” he asked her.

“I’m not exactly running it,” she replied.

“Looks like that to me.”

“Yeah, well what is a nice engineer from MIT doing running Israel?”

“Ha, have you seen my coalition? You have more influence on Saudi Arabia than I do on Israel.”

Danielle continues to negotiate with Netanyahu as part of her effort to promote her peace plan for the Middle East. She agrees with Bibi that Israeli settlers should be allowed to stay in the West Bank under Palestinian rule.

How You Can Be a Danielle and help promote peace in the Middle East.

100. Yad Vashem

Yad Vashem, Israel’s official memorial to the Holocaust and its victims, was opened in 1953, five years after the founding of the State of Israel. It is located on the Mount of Remembrance in Jerusalem. The primary feature of Yad Vashem is the Holocaust History Museum, but it also houses memorial sites including the Children’s Memorial, the Hall of Remembrance, the Museum of Holocaust Art, and an educational center called the International Institute for Holocaust Studies.

The entrance to the Yad Vashem Holocaust Museum overlooking Jerusalem

About one million people visit Yad Vashem each year, making it second in popularity only to the Western Wall, a small surviving piece of the western retaining wall of the Second Jewish Temple and the holiest site in Judaism. The Western Wall sits on a hill known as the Temple Mount, which is holy to Jews, Christians, and Muslims.

A key objective of Yad Vashem has been to honor gentiles who, “at great personal risk” and without a selfish motive, saved Jewish lives from the Holocaust genocide.

These heroes are honored in a section of Yad Vashem called the Garden of the Righteous Among the Nations.

Yad Vashem seeks to maintain the memory and history of the six million Jews murdered in the Holocaust and holds ceremonies of remembrance, supports research on Holocaust history, and preserves a vast number of memoirs, documents, photographs, artifacts, and diaries.

The original museum in 1957 focused on uprisings in the Warsaw Ghetto and the Sobibor and Treblinka death camps. In 2005, a new and far more comprehensive museum opened replacing the original, after twelve years of development. It contains 10 linked exhibition halls, each devoted to a chapter of the Holocaust story. Avner Shalev, Yad Vashem curator, puts it this way:

[Yad Vashem] looks into the eyes of the individuals. There weren’t six million victims, there were six million individual murders … The museum serves as an important signpost to all of humankind, a signpost that warns how short the distance is between hatred and murder, between racism and genocide.

I visited the old Yad Vashem museum in 1985 and 1995 and the new museum in 2009 and found each visit to be a deeply informative and moving experience.


In the alternative reality of Danielle: Chronicles of a Superheroine, eleven-year-old Danielle and nineteen-year-old Claire both wear black dresses when they visit Yad Vashem, and Danielle shares her perspective on the Holocaust with a world audience.

See entries for Hitler’s Final Solution, the Eternal Flame, the Hall of Remembrance, and the Hall of Names.

How You Can Be a Danielle and prevent future genocides.

101. Holocaust

The Holocaust refers to the organized Nazi program to systematically exterminate the Jews during World War II. This act of genocide resulted in the killing of six million Jews, which was two thirds of the Jewish population in Europe, plus an additional five to nine million non-Jews.

See entry for Hitler’s Final Solution.

How You Can Be a Danielle and prevent future genocides.

102. Eternal Flame

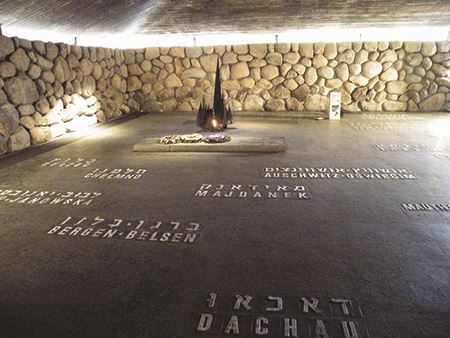

The Eternal Flame in the Yad Vashem Holocaust Memorial

The Eternal Flame at the Yad Vashem Holocaust Memorial contains an inextinguishable flame in what appears to be a broken bronze goblet. The flame provides the light for the Hall of Remembrance. Next to it is a stone crypt with the ashes of Holocaust victims from the death camps, and arrayed around the flame are the names of the concentration and death camps in Hebrew and English.


In the alternative reality of Danielle: Chronicles of a Superheroine, Danielle and Claire place 15 stones by the Eternal Flame commemorating the 15 million victims of the Holocaust.

See entries for Hitler’s Final Solution, Yad Vashem, the Hall of Remembrance, and the Hall of Names.

How You Can Be a Danielle and prevent future genocides.

103. Hall of Remembrance

The Hall of Remembrance at the Yad Vashem Holocaust Memorial, dedicated in 1961, has become the focal point for commemoration and prayer for the victims of the Holocaust.

Pope Francis prays after laying a wreath in the Hall of Remembrance at the Yad Vashem Holocaust Memorial on May 26, 2014


In the alternative reality of Danielle: Chronicles of a Superheroine, it is at the Hall of Remembrance that the eleven-year-old Danielle shares her thoughts on the Holocaust with a world audience.

See entries for Hitler’s Final Solution, Yad Vashem, the Eternal Flame, and the Hall of Names.

How You Can Be a Danielle and prevent future genocides.

104. Shoah

Shoah is a biblical word that means “destruction.” As early as the 1940s, it became the Hebrew term to refer to the Holocaust.

See entry for Hitler’s Final Solution for the story of the Shoah, or Holocaust.

How You Can Be a Danielle and prevent future genocides.

105. Hall of Names

The Hall of Names in the Yad Vashem Holocaust Museum is a memorial devoted to each individual Jewish victim. It symbolizes their individuality by displaying 600 individual photographs in a large cone above the hall. Below it, a lower cone containing water reflects these images, representing victims whose names are unknown.

The Hall of Names also contains an archive with over two million pages of written testimony, over 100,000 testimonials in audio, video, and other forms, as well as a computerized data bank.

The Hall of Names in the Yad Vashem Holocaust Museum


In the alternative reality of Danielle: Chronicles of a Superheroine, during her speech to a world audience, the eleven-year-old Danielle shares her reaction to seeing a woman in the Hall of Names who is still grieving for her lost child.

See entries for Hitler’s Final Solution, Yad Vashem, the Eternal Flame, and the Hall of Remembrance.

How You Can Be a Danielle and prevent future genocides.

106. Hannah Arendt

Johanna “Hannah” Arendt (1906–1975) was a German-born American social philosopher, although she preferred the description “political theorist.”

Portrait of German-born American political theorist and author, Hannah Arendt in 1949

In the 1920s, Arendt, while a student at the University of Marburg, began a romantic relationship with Martin Heidegger, a highly influential (and married) German philosopher who taught there and later became rector of the University of Freiburg. She became troubled by reports that he was giving speeches at meetings of the National Socialist (Nazi) party. She asked him to deny these reports, which he declined to do, replying instead that he still had romantic feelings for her. She ended their relationship shortly after that in 1932.

Arendt was denied a professorship in Germany because she was Jewish. She conducted research on anti-Semitism and was arrested by the Nazi Gestapo in 1933, and released after a brief imprisonment. She fled Germany that year for Czechoslovakia, then moved to Geneva, Switzerland, finally settling in Paris, France, where she helped Jewish refugees. After Germany conquered France, she was deported by the Vichy Regime (the government of France after its defeat by the Nazis and widely regarded as a Nazi puppet government) to Camp Gurs in southwest France, an internment camp for “enemy aliens.” She managed to be released after a few weeks, obtained an illegal visa to travel to Portugal, and ended up settling in the United States. After World War II, she returned temporarily to Germany and worked for the Zionist organization Youth Aliya, which saved thousands of children from the Holocaust and helped settle them in the British Mandate of Palestine (a portion of which became Israel in 1948).

She became a naturalized citizen of the United States in 1950 and rekindled her romance with Heidegger for two years. At that time, she defended Heidegger from severe criticism for his role as a supporter of the Nazis during World War II, saying that he had been naïve and that in any event his philosophy was not related to that of the Nazis. Her relationship with and defense of Heidegger earned her considerable criticism.

Arendt’s first major book, The Origins of Totalitarianism, published in 1951, took equal aim at Stalinism and Nazism and was opposed by the American Left for portraying the two movements as moral equivalents.

She was chosen to report on the 1961 trial of Adolf Eichmann for The New Yorker. Eichmann, who has been called the architect of the Holocaust, was captured in Argentina in 1960 by Mossad, Israel’s intelligence service. He was put on trial in Israel, found guilty of war crimes, and hanged in 1962. Her report was expanded into her most influential book, Eichmann in Jerusalem: A Report on the Banality of Evil, published in 1963.

The primary message of the book is summed up in the last three words of the title. Arendt examined the nature of human evil and concluded that evil can result from a failure to question the ideas and values that are prevalent in one’s world.

Arendt has been closely associated with this emphasis on the moral importance of critical thinking, of taking personal responsibility for one’s actions and not falling back, as many people do, on the attitudes and blind spots of one’s community. It is a powerful idea, but I would point out that evil is not always banal: that adjective would not be an apt description of Hitler.

Arendt ends the book with this passage:

Just as you [Eichmann] supported and carried out a policy of not wanting to share the earth with the Jewish people and the people of a number of other nations—as though you and your superiors had any right to determine who should and who should not inhabit the world—we find that no one, that is, no member of the human race, can be expected to want to share the earth with you. This is the reason, and the only reason, you must hang.


In the alternative reality of Danielle: Chronicles of a Superheroine, eleven-year-old Danielle refers to Arendt in her speech at Yad Vashem, “[Arendt] expected to descend into the bowels of human loathing [when she interviewed Eichmann]. Instead she encountered an ordinary and prosaic bureaucrat whose malevolence resulted from his failure to question the values in his midst. The Shoah resulted at least in part from this failure of critical thinking, from this ‘banality of evil,’ to quote her deservedly famous phrase.”

See entry for Adolf Eichmann for further discussion of Hannah Arendt’s views on Eichmann. See also entries for Hitler’s Final Solution and Yad Vashem.

How You Can Be a Danielle and advance critical thinking.

107. Adolf Eichmann

Adolf Eichmann in Israel on trial for genocide, war crimes, and crimes against humanity in 1961

Otto Adolf Eichmann (1906–1962), a German Nazi lieutenant colonel, was regarded as the leading architect and organizer of the Holocaust, the systematic Nazi program to exterminate the Jews during World War II. This act of genocide resulted in the killing of six million Jews, which was two thirds of the Jewish population in Europe, plus an additional five to nine million non-Jews. (See the entry for Hitler’s Final Solution for a more complete history.)

In 1941, as the Nazis began their invasion of the Soviet Union, the Nazi policy for the Jewish populations under their control began to switch from exile to extermination. To formulate a definitive plan, Reinhard Heydrich (born in 1904, assassinated in 1942 in Prague), one of the highest-ranking Nazi officials during World War II, with the titles of SS-Obergruppenführer, General der Polizei (police), and Chief of the Reich Main Security Office, hosted a meeting called the Wannsee Conference on January 20, 1942, held in a villa in a Berlin suburb. The Nazi regime’s administrative leaders, including Eichmann and Heydrich, attended. Eichmann was responsible for collecting the background information for Heydrich, preparing the recommendations, and writing the minutes. The decision reached at the Wannsee Conference was for the Jews of Europe to be transported to death camps, primarily in Poland. Immediately after the Wannsee Conference, Eichmann was put in charge of implementing the plan. He determined that the primary method of killing would be to gas the victims and then burn their bodies.

When the Nazis were defeated by the Allies in 1945, Eichmann escaped to Austria where he lived for five years, then emigrated to Argentina using forged papers and lived there with a false identity. In 1960, he was captured by Mossad, Israel’s intelligence service, and brought to Israel to stand trial on 15 criminal charges including war crimes and crimes against humanity.

Eichmann’s defense was the same as that used by many of the Nazi defendants accused of war crimes, such as those who stood trial at the Nuremberg trials in 1945–1946: he had no choice, was bound by his oath of loyalty, and was just following orders. Eichmann said the decision to annihilate the Jews was not made by him, but by Müller, Heydrich, Himmler, and ultimately Hitler (Heinrich Müller, known as Gestapo Müller, was Chief of the Gestapo, the secret police of Nazi Germany; Heinrich Himmler had the title Reichsführer and was regarded as second in command to Hitler; Adolf Hitler was the Chancellor of Germany from 1933 to 1945 and Führer, or ultimate leader, of Nazi Germany from 1934 until its defeat in 1945). “I was one of the many horses pulling the wagon,” Eichmann said in his defense, “and I couldn’t escape left or right because of the will of the driver.”

The social philosopher Hannah Arendt reported on Eichmann’s 1961 trial for The New Yorker. She noted his flat affect and apparently ordinary demeanor. He did not appear to be a monster, but rather a banal bureaucrat, hence her famous phrase “the banality of evil,” which became part of the title of her 1963 book on the trial, Eichmann in Jerusalem: A Report on the Banality of Evil.

Eichmann was found guilty of several criminal counts including war crimes and crimes against humanity and was hanged in 1962.

The true nature of Eichmann and his massive crimes has been the subject of considerable debate given its importance in understanding the nature of human evil. Several historians and observers have criticized Arendt’s characterization of Eichmann as a banal bureaucrat, saying this was historically incorrect. German journalist Elke Schmitter wrote in the German magazine Der Spiegel that Eichmann’s “performance in Jerusalem was a successful deception,” and that evidence that came out after the trial showed that he was a fanatical anti-Semite. Film historian Saul Austerlitz wrote in a review of Arendt’s book in The New Republic that her “book makes for good philosophy, but shoddy history.” University of Southampton professor of social and political philosophy David Owen wrote that “while Arendt’s thesis concerning the banality of evil is a fundamental insight for moral philosophy, she is almost certainly wrong about Eichmann.”

Arendt defended her description of Eichmann and wrote that she was aware of much of the “new” material that purportedly surfaced after the trial, such as an interview of Eichmann conducted while he was in hiding in Argentina by the Nazi journalist and war criminal Willem Sassen. Arendt acknowledged that Eichmann was indeed a rabid anti-Semite, but argued that this was not inconsistent with his being “banal,” by which she meant that he was incapable of original thought, of questioning assumptions. Arendt wrote, “whether writing his memoirs in Argentina or in Jerusalem, he always sounded and spoke the same. The longer one listened to him, the more obvious it became that his inability to speak was closely connected with an inability to think, namely, to think from the standpoint of someone else.”

It is clear that Eichmann’s defense that he was just following orders was not correct. For example, in 1944, Eichmann’s superior Heinrich Himmler, hoping to obtain leniency given his perception of the inevitability of defeat, issued orders to “take good care of the Jews, act as their nursemaid.” Eichmann disobeyed these orders and instead organized Jewish death marches. According to Arendt, Eichmann “did his best to make the Final Solution final.” Eichmann was quoted near the end of the war as saying that he “would leap laughing into the grave because the feeling that he had five million people on his conscience would be for him a source of extraordinary satisfaction.”

While in Israeli custody, he was quoted as saying, “To sum it all up, I must say that I regret nothing … We shall meet again. I have believed in God. I obeyed the laws of war and was loyal to my flag.”


In the alternative reality of Danielle: Chronicles of a Superheroine, eleven-year-old Danielle discusses Hannah Arendt, Adolf Eichmann, and the nature of evil in her speech at Yad Vashem, watched by a world audience.

See entries for Hitler’s Final Solution, Yad Vashem, and Hannah Arendt.

How You Can Be a Danielle and prevent future genocides.

108. Banality of Evil

“Banality of Evil” is a portion of the title of a 1963 book by Hannah Arendt (Eichmann in Jerusalem: A Report on the Banality of Evil). The phrase summarizes Arendt’s philosophy of the importance of critical thinking.


In the alternative reality of Danielle: Chronicles of a Superheroine, eleven-year-old Danielle cites this famous phrase in her speech at Yad Vashem, Israel’s memorial and museum about the Holocaust.

See entry for Hannah Arendt.

109. Never again

According to Raul Hilberg, the noted Holocaust historian, shortly after US forces liberated the Nazi death camp Buchenwald in April of 1945, inmates held up handmade signs with the phrase “Never again.” The truth of this report has not been confirmed, but the phrase has become associated with the Holocaust. It has also been associated with the founding of Israel as a means by which the Jewish people would assure the sentiment expressed by the phrase.


In the alternative reality of Danielle: Chronicles of a Superheroine, eleven-year-old Danielle uses these two words in her speech at Yad Vashem, Israel’s memorial and museum about the Holocaust.

See entry for Hitler’s Final Solution.

How You Can Be a Danielle and prevent future genocides.

110. Kadima

For years, Israeli politics was split between the Labor party, which favored liberal economic policies along with support for negotiation with the Palestinians for a peace treaty that would give the Palestinians control of the West Bank, and the Likud party, which, in contrast, favored smaller government and lower taxes and was skeptical of calls for Israel to relinquish control of the West Bank.

Ariel Sharon (1928–2014), who had led the assault on the Sinai in Israel’s successful “Six Day War” in 1967 and the encirclement of Egypt’s Third Army in the 1973 Yom Kippur War, was regarded as perhaps the greatest Israeli general, and was widely known by his nickname, “The King of Israel.” As leader of the Likud party, he became Israel’s prime minister in 2001. By 2003, Sharon became concerned that if Israel held onto the West Bank, it would lead either to Israel no longer being a Jewish state (given the size of the Arab population in the West Bank) or to an undemocratic state (if Israel decided not to give citizenship and voting rights to the Palestinian population under its control). Based on this reasoning, he decided on a policy of unilateral disengagement from the Palestinian population in the Gaza Strip and West Bank.

Israeli Prime Minister Ariel Sharon in the early 2000s

In 2003, Prime Minister Sharon proposed that Israel simply vacate the Gaza Strip, to be followed by a similar move in the West Bank. The proposal for leaving Gaza was adopted by the Israeli government in June of 2004, approved by the Knesset (Israeli parliament) in February of 2005, and implemented in August of 2005. Some Israeli citizens living in the Gaza Strip accepted payments from the Israeli government to leave Gaza, whereas others were forcibly evicted by the Israeli military. The Likud party under Benjamin Netanyahu criticized the withdrawal, saying that the result would be Gaza becoming a threat to Israel.

Indeed, Hamas, which was recognized by the US and Europe as a terrorist organization, won the Palestinian elections in both Gaza and the West Bank in 2006, and in 2007 in a military clash with the Fatah-controlled Palestinian government took over Gaza. This led to Hamas launching missile attacks against Israel from the Gaza Strip and wars between Israel and Hamas-controlled Gaza in 2008 and 2014.

On November 21, 2005, Prime Minister Sharon resigned as head of the Likud party, formed a new party called Kadima (“Forward”) with the stated policy of unilateral Israeli disengagement from the West Bank, dissolved Parliament and called for new elections. Polls indicated that Sharon would win, but in January of 2006 he suffered a debilitating stroke that left him in a coma. Kadima won the election anyway and Ehud Olmert, who had taken over leadership of Kadima, became Prime Minister. Benjamin Netanyahu became leader of Likud.

In the 2009 elections, Kadima, under the leadership of Tzipi Livni, won the most seats (28 out of 120), but was unable to form a governing coalition, and Netanyahu and the Likud party returned to power. Shortly after this, I met with Livni, then Israel’s opposition leader, in her office. She supported Sharon’s original view that Israel could not keep control of the West Bank indefinitely, but also felt that Israel had no responsible negotiating partner in the West Bank.

In 2012, Livni was defeated for continued leadership of Kadima and she formed a new center-left party called Hatnuah. With this splintering of the party, Kadima won only two seats in the Knesset in the 2013 elections, and did not participate in the 2015 elections.


In the alternative reality of Danielle: Chronicles of a Superheroine, eleven-year-old Danielle manages to politically box in both the Kadima and Likud parties with her Middle East peace proposal.

See entries for Benjamin Netanyahu and Likud.

How You Can Be a Danielle and help promote peace in the Middle East.

111. Likud

Likud, a major center-right and secular political party in Israel, was founded in 1973 by Ariel Sharon and Menachem Begin, who had been the leader of Irgun, a Zionist militant group which had fought British control in Palestine from 1944 to the establishment of the Israeli state in 1948. Likud, allied with both right-wing and liberal parties, won a landslide victory in 1977 making Begin prime minister.

In 1979, Prime Minister Begin signed a peace treaty with Egypt, leading to Israel withdrawing from the Sinai desert, and he shared the Nobel Peace Prize with Anwar Sadat. Israel’s peace accord with Egypt has remained in force, although it has largely been a cold peace. Although Likud has traditionally been skeptical of peace agreements with Israel’s Arab neighbors, it has been said that it is only right-wing parties such as Likud that can make such peace agreements and deliver Israeli popular support.

Begin’s government was also responsible for the successful destruction of the Osirak nuclear plant in Iraq on June 7, 1981 and is credited with having destroyed Saddam Hussein’s nascent program to build an atomic bomb.

After leading the country for much of the 1980s, Likud lost control of the government in 1992. Likud again became the ruling party under the leadership of Benjamin Netanyahu in 1996. Likud lost again in 1999 but regained control of the government under Ariel Sharon in 2001. In 2003, Prime Minister Sharon changed his position with regard to negotiating with the Arabs and advocated unilateral withdrawal from the Gaza Strip and the West Bank. This was strongly criticized by Netanyahu and, while prime minister, Sharon left Likud to form the Kadima party.


In the alternative reality of Danielle: Chronicles of a Superheroine, eleven-year-old Danielle uses a unique strategy to promote her Middle East peace plan through the intricacies of Israeli politics.

See entries for Kadima and Benjamin Netanyahu.

How You Can Be a Danielle and help promote peace in the Middle East.

112. Palestine

Palestine may refer to any of the following: (i) a geographic region in the Middle East located between the Mediterranean Sea and the Jordan River, (ii) the geographic area currently comprised of Israel, the West Bank and the Gaza Strip, (iii) a proto-state located on the West Bank and the Gaza Strip recognized by some nations, or (iv) the Palestinian National Authority, a government established in 1994 to govern the West Bank and the Gaza Strip.

The name goes back to the Ancient Greeks, and was also used by the Roman Empire to refer to a region that includes modern Syria and Israel. Over the millennia, it has been controlled variously by the Ancient Egyptians, Persians, Romans, Arabs, Christian Crusaders, and others. The borders of the region have also changed throughout history.

After a series of military campaigns, the British were awarded a mandate to govern Palestine by the League of Nations in 1922. Non-Jewish inhabitants of the region mounted revolts in 1920, 1929, and 1936 but Britain maintained control.

At Britain’s request in 1947, after the conclusion of World War II and the Holocaust, the United Nations General Assembly adopted Resolution 181(II) recommending Palestine be partitioned into an Arab State and a Jewish state with a “Special International Regime” to govern the city of Jerusalem.

The British mandate was scheduled to terminate at midnight at the end of May 14, 1948. David Ben-Gurion, the Executive Head of the World Zionist Organization, declared the establishment of the State of Israel to take effect at midnight. Eleven minutes after midnight, the Truman Administration announced de facto recognition by the United States of the new nation, which was followed by official recognition by the United States on January 31, 1949. The first nation to officially recognize the State of Israel was the Soviet Union on May 17, 1948. Israel was admitted to membership of the United Nations on May 11, 1949.

On May 15, 1948, hours after the creation of the State of Israel, a coalition of Arab states declared war on Israel and attacked it in what became the first Arab-Israeli war. Many Arabs have referred to the creation of Israel as “Nakba” (the Catastrophe). The war lasted ten months with Israel capturing an additional 26 percent of the original British Mandate territory.

The subsequent period of over half a century has witnessed frequent wars and military conflicts between Israel and its Arab neighbors which continue to the present time.


In the alternative reality of Danielle: Chronicles of a Superheroine, eleven-year-old Danielle presents a unique plan for a Middle East peace settlement concerning Palestine that differs in a significant way from attempted peace plans of the past.

See entries for Hitler’s Final Solution, Benjamin Netanyahu, Kadima, and Likud.

How You Can Be a Danielle and help promote peace in the Middle East.

113. Terahertz frequency

In the 1960s, the fields of computation and communications relied on electronic clocks running at megahertz frequency, meaning a million pulses or cycles per second. This meant that the time for an operation such as a calculation was measured in millionths of a second.

Around the end of the twentieth century, these clocks were replaced with ones using gigahertz frequency, meaning a billion cycles per second, and calculation speeds began to be measured in billionths of a second.

Scientists are now experimenting with terahertz clocks, meaning a trillion (million million) cycles per second. The promise is computation and communication speeds that are thousands of times faster than those from around 2015.
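The relationship between clock frequency and the time per cycle described above is a simple reciprocal. The following Python sketch, with names chosen here purely for illustration, converts each frequency mentioned in this entry into a cycle time and compares terahertz clocks with the gigahertz clocks typical of around 2015. It treats one operation as one clock cycle, which is a simplifying assumption.

```python
# Cycles per second for each frequency range mentioned in the text.
FREQUENCIES_HZ = {
    "megahertz": 10**6,   # a million cycles per second
    "gigahertz": 10**9,   # a billion cycles per second
    "terahertz": 10**12,  # a trillion cycles per second
}


def cycle_time_seconds(frequency_hz: int) -> float:
    """Duration of one clock cycle: the reciprocal of the frequency."""
    return 1 / frequency_hz


def speedup(new_hz: int, old_hz: int) -> float:
    """How many times faster a clock at new_hz ticks than one at old_hz."""
    return new_hz / old_hz


if __name__ == "__main__":
    for name, hz in FREQUENCIES_HZ.items():
        print(f"{name}: {cycle_time_seconds(hz):.0e} seconds per cycle")
    ratio = speedup(FREQUENCIES_HZ["terahertz"], FREQUENCIES_HZ["gigahertz"])
    print(f"terahertz vs. gigahertz: {ratio:.0f} times faster")
```

A terahertz clock ticks a thousand times for every tick of a one-gigahertz clock, so each cycle lasts a trillionth of a second.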

Terahertz frequency also has significance for noninvasive security scanning in that terahertz frequency electromagnetic waves can travel long distances and reveal dangers. They can see through clothing and detect concealed weapons, including non-metallic ones, as well as substances such as explosives and biological and chemical weapons. This form of detection can potentially be done from significant distances, so it could be used to scan people in a crowd, such as a crowded marketplace. The resulting interference patterns could be automatically analyzed by artificial intelligence systems to detect security hazards.

It is widely believed that terahertz scanning of people is safe although some scientists have expressed concern that it could disrupt DNA replication.


In the alternative reality of Danielle: Chronicles of a Superheroine, part of eleven-year-old Danielle’s security plan for the Middle East is for Israel to use terahertz frequency scanning to prevent terrorist attacks.

How You Can Be a Danielle and become a physicist.

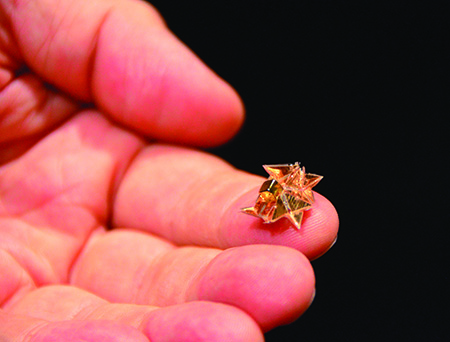

114. Microbot swarm

A microbot is a robot in which the key features are measured in microns (millionths of a meter) and the overall dimensions of the robot are measured in millimeters (thousandths of a meter). Such a robot would contain a computer, sensors to detect its environment, such as a video camera and pressure sensors, and actuators so that it can move and manipulate its surroundings.

Microbots emerged in the first decade of the twenty-first century with the advent of single-chip computers and microelectromechanical systems (MEMS), which are mechanical systems built using chip technology. Research on microbots goes back to the 1970s.

A microbot developed at MIT and Technische Universität München in 2015

A microbot swarm is a large number of coordinated microbots intended to perform a single mission. Examples include exploring dangerous terrain or searching for survivors in a building that is on fire or in danger of collapse.

Microbot swarms can also be used for security and military missions. For example, an enclosure containing a swarm of microbots could be dropped from a vehicle, such as a plane, and would then release its cargo of microbots to perform a reconnaissance or offensive mission. The target would find such an attack difficult to defend against, given that it involves hundreds of small robots.

In the alternative reality of Danielle: Chronicles of a Superheroine, microbot swarms are part of eleven-year-old Danielle’s plan for Israeli security.

115. Terahertz scanning

Terahertz scanning is an emerging security technology using electromagnetic waves that are in the terahertz (trillions of cycles per second) frequency range.

See entry for Terahertz frequency.

116. Winston Churchill

Winston Churchill (1874–1965) is regarded by many (including this author) as the greatest European leader of the twentieth century. He led Britain to withstand an overwhelming assault from Nazi Germany and thereby enabled the Allied invasion of Normandy to retake Western Europe from Nazi control.

Churchill was a commoner, although he was the grandson of the Duke of Marlborough. His father, Lord Randolph, had been elected to the House of Commons (the Lower House of Parliament) representing the Conservative (Tory) party and was the Chancellor of the Exchequer in the 1880s. Churchill was first elected to the House of Commons in 1900 and joined the cabinet as president of the Board of Trade in 1908.

Winston Churchill in 1941 on a visit to Canada during World War II, portrait by Yousuf Karsh

During much of the 1930s Churchill was an isolated voice in the House of Commons warning about the need to re-arm against Germany and the danger of the Nazi party. The prevailing opinion in England, and in Parliament, during this time was one of accommodation to the rise of Fascism in Germany.

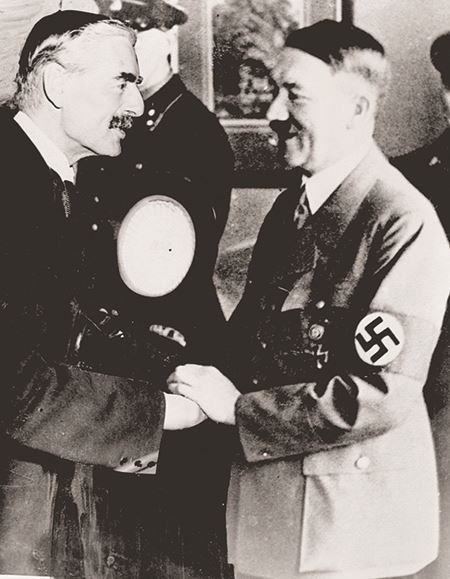

In September 1938, Prime Minister Neville Chamberlain (1869–1940) met several times with Reichskanzler (chancellor) and Führer (absolute leader) Adolf Hitler, first at Hitler’s private mountain retreat in Berchtesgaden on September 15 and finally in Munich on September 29 and 30, to discuss reports that Hitler intended to invade Czechoslovakia.

The resulting “Munich Agreement” provided for Germany to annex the Sudetenland, a largely German-speaking border region that had belonged to Austria-Hungary before being incorporated into Czechoslovakia in the peace settlement that followed World War I. Chamberlain returned to England, and in a famous speech said, “The settlement of the Czechoslovakian problem … is, in my view, only the prelude to a larger settlement in which all Europe may find peace … a British Prime Minister has returned from Germany bringing peace with honour. I believe it is peace for our time. … Go home and get a nice quiet sleep.”

British Prime Minister Neville Chamberlain and Adolf Hitler meeting on September 30, 1938 in Germany

On October 5, 1938, Churchill gave a blistering speech in the House of Commons condemning the Munich pact, saying:

> I will begin by saying what everybody would like to ignore or forget but which must nevertheless be stated, namely, that we have sustained a total and unmitigated defeat. … We in this country, as in other Liberal and democratic countries, have a perfect right to exalt the principle of self-determination, but it comes ill out of the mouths of those in totalitarian states who deny even the smallest element of toleration to every section and creed within their bounds. … It is the most grievous consequence which we have yet experienced of what we have done and of what we have left undone in the last five years—five years of futile good intention, five years of eager search for the line of least resistance, five years of uninterrupted retreat of British power, five years of neglect of our air defences. … [T]here can never be friendship between the British democracy and the Nazi power, that Power … which vaunts the spirit of aggression and conquest, which derives strength and perverted pleasure from persecution, and uses, as we have seen, with pitiless brutality the threat of murderous force. That Power cannot ever be the trusted friend of the British democracy.

The “peace for our time” that Chamberlain promised was short-lived, as Nazi Germany went on to invade Czechoslovakia and Poland, leading Britain to declare war on Germany on September 3, 1939, with Chamberlain still in his position as prime minister. However, critics called this the “Phony War,” as Chamberlain did essentially nothing to prosecute the war aside from some minor naval attacks.

When Germany invaded France on May 10, 1940, Chamberlain was stunned. Confidence in him instantly evaporated and he resigned that day. Chamberlain himself advised King George VI to ask Churchill to form the next government, and on that same day Churchill became prime minister of Great Britain. His first official act was to thank Chamberlain for his support.

Neville Chamberlain and the Munich Pact that he negotiated with Hitler have become known as the ultimate symbols of appeasement. Although Churchill was nearly alone in the 1930s in recognizing Hitler’s true face and in condemning England’s failure to resist signs of Nazi aggression, he quickly rallied his countrymen and women after becoming prime minister to stand up to the Nazi onslaught in Europe.

France fell quickly. On July 10, 1940, France established the Vichy Government, which relocated the administration of France from Paris to the town of Vichy with Philippe Pétain as Marshal (Leader) of France in what was widely regarded as a puppet regime controlled by the Nazis.

A large part of the French Navy was docked at its base at Mers-el-Kébir on the coast of French Algeria. Concerned that this naval force would come under control of the Nazis, and despite assurances from French leaders that this would not happen, Churchill ordered the destruction of the French fleet there on July 3, 1940, resulting in the deaths of 1,297 French servicemen. It was the first military action by England against France in 125 years. The Mers-el-Kébir attack immediately changed world opinion regarding the resolve of Britain, and in particular of Churchill, to resist the Nazis. Prior to this attack, it was generally expected that England would quickly fall to the Nazis as had the rest of Europe. It was this act that caused American President Franklin Roosevelt to realize that Churchill was a man he could trust. He praised Churchill’s courageous action and lauded it as helping American security. This began a close and crucial relationship between the two leaders.

On October 21, 1941, British mathematician and computer pioneer Alan Turing (1912–1954) and his colleagues at the British Government Code and Cypher School at Bletchley Park (where Turing’s section, Hut 8, was responsible for breaking German naval codes) wrote a letter to Churchill asking for greatly increased resources for their code-breaking efforts. The letter bypassed Turing’s chain of command and was resented by his superiors, but according to Turing’s biographer Andrew Hodges, the letter had a dramatic and immediate effect on Churchill, who wrote to British General Ismay, “ACTION THIS DAY. Make sure [Turing and his colleagues] have all they want on extreme priority and report to me that this has been done.”

The result was a series of code-breaking computers which were among the first computers ever successfully constructed. They provided a continuous transcription of encrypted Nazi military messages to Churchill and the British leadership, including messages to the Luftwaffe (the German Air Force) and the German fleet of U-boats. Churchill had to use this information with extreme discretion lest it tip off the Germans that their Enigma code had been cracked. Turing and his fellow mathematicians assisted Churchill in this task by computing what fraction of such messages they could actually use without creating suspicion. Historians credit the code-breaking efforts by Turing and his colleagues, and Churchill’s support of their efforts, with having enabled the British Royal Air Force to resist the far greater numerical strength of the Luftwaffe, and otherwise prevail in the Battle of Britain.

Starting with Roosevelt’s positive reaction to Churchill’s destruction of the French fleet at Mers-el-Kébir, the relationship between Churchill and Roosevelt was one of the great personal and military alliances in twentieth-century history. Between 1939 and 1945 they wrote each other about 1,700 letters and had 120 days of close personal contact. Roosevelt provided extensive war supplies and financial support for England’s war against Germany via a new form of financing called Lend-Lease, in which the loans were repaid with assistance in defending the United States rather than with money.

Starting in 1939, German forces used a military strategy that became known as Blitzkrieg (“lightning war”), which consisted of quick thrusts by tank divisions coordinated with overwhelming air power. This strategy, combined with the strength of the German rearmament, quickly overran mainland Europe, including Czechoslovakia and Poland, and on June 16, 1940, France. Churchill rallied the morale of his countrymen and women with his defiant and inspired oratory. On the eve of the French capitulation, he spoke to the British people and the world in the form of a speech to the House of Commons:

> … [T]he Battle of France is over … the Battle of Britain is about to begin. Upon this battle depends the survival of Christian civilization. Upon it depends our own British life, and the long continuity of our institutions and our Empire. The whole fury and might of the enemy must very soon be turned on us. Hitler knows that he will have to break us in this island or lose the war. If we can stand up to him, all Europe may be freed and the life of the world may move forward into broad, sunlit uplands. But if we fail, then the whole world, including the United States, including all that we have known and cared for, will sink into the abyss of a new Dark Age made more sinister, and perhaps more protracted, by the lights of perverted science. Let us therefore brace ourselves to our duties, and so bear ourselves that if the British Empire and its Commonwealth last for a thousand years, men will still say, “This was their finest hour.”

The Blitzkrieg, directed at England in the form of a devastating air campaign known as the Blitz, started on September 7, 1940, and lasted nine months. All major British cities sustained enormous aerial raids, including 71 major attacks on London. Beginning on that first night, the German Luftwaffe bombed London for 57 consecutive nights. The campaign resulted in the destruction of more than one million London homes.

Londoners spent days, and in some cases weeks, at a time in underground shelters, some fashioned from subway stations. Churchill again used his oratory to keep British morale and resolve high, and credited the shelters with limiting damage:

> We were told also, on last Thursday week, that 251 tons of explosives were thrown upon London in a single night, that is to say, only a few tons less than the total dropped on the whole country throughout the last war. Now we know exactly what our casualties have been. On that particular Thursday night 180 persons were killed in London as a result of 251 tons of bombs. That is to say it took one ton of bombs to kill three-quarters of a person. … In [World War I] the small bombs of early patterns which were used killed ten persons for every ton discharged in the built-up areas. … That is, the mortality is now less than one-tenth of the mortality attaching to the German bombing attacks in the last war. … What is the explanation? There can only be one, namely the vastly improved methods of shelter which have been adopted.

The Nazi focus on bombing British cities diverted them from attacking the British war economy. This lopsided Nazi strategy, together with effective British defenses, prevented significant damage to the British economy and war production.

When Japan attacked Pearl Harbor on December 7, 1941, Churchill was quoted as saying “We have won the war!” realizing that the attack would give Roosevelt the political cover he needed to declare war against both Japan and Germany. In an address to a joint meeting of the US Congress on December 26, 1941, Churchill asked rhetorically, “What kind of people do they (the German and Japanese leadership) think we are?” Churchill became known in the American and Russian press as “the British Bulldog.”

On June 6, 1944, Allied Forces under the command of US General Dwight Eisenhower invaded Normandy, deploying from bases on the southern coast of England in the largest seaborne invasion in history. Known as “D-Day,” the invasion marked a turning point in the war against Nazi Germany. Germany surrendered on May 7, 1945.